Google doubles down on AI in its Android Show!

Also: Notion is becoming an OS for AI agents, while Amazon unveils a new AI-powered shopping assistant 🛍️.

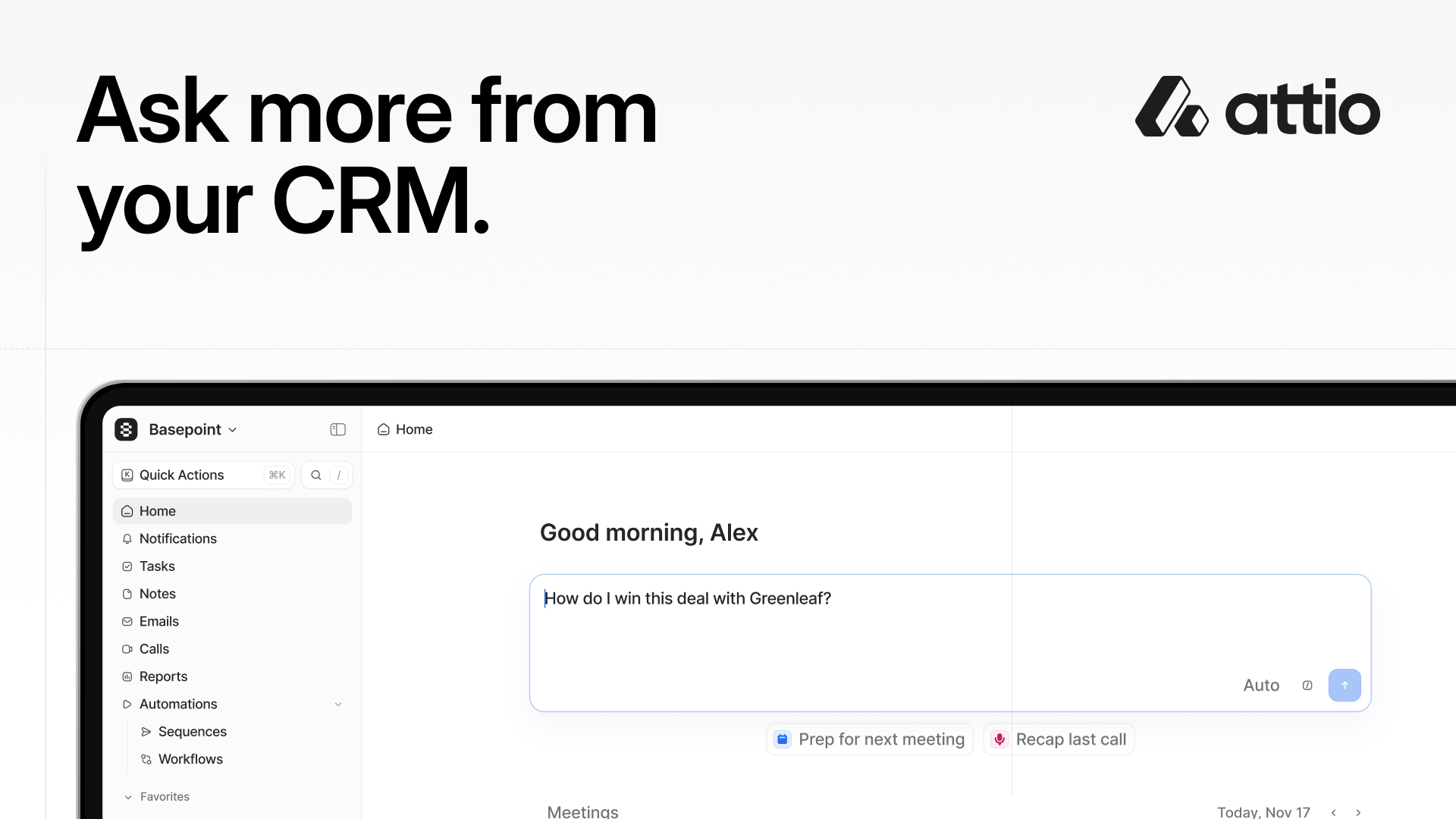

Attio is the AI CRM for high-growth teams.

Connect your email, calls, product data and more, and Attio instantly builds your CRM with enriched data and complete context. Whether you’re running product-led growth or enterprise sales, Attio adapts to your unique GTM motion.

Then Ask Attio to plan your next move.

Run deep web research on prospects. Update your pipeline as you work. Find customers and draft outreach emails. Powered by Universal Context, Attio's intelligence layer, Attio searches, updates, and creates across your data to accelerate your workflow.

Ask more from your CRM.

Spot the trend in the headlines? Yep, everyone’s doubling down on their AI bets.

Forward thinkers, hello and welcome to issue #158 of the Neural Frontier.

If those headlines aren’t enough to get you pumped, we don’t know what will 😏.

Let’s dive into the big moves (or bets?) from Google, Notion, and Amazon.

In a rush? Here's your quick byte:

🤖 Google doubles down on AI in its Android Show!

💻 Notion is becoming an OS for AI agents.

🛒 Amazon unveils a new AI-powered shopping assistant!

⚡ The Neural Frontier’s weekly spotlight: 3 AI tools making the rounds this week.

🤖 Google doubles down on AI in its Android Show!

Source: Google

At its Android Show ahead of I/O 2026, Google unveiled a flood of new Android and Gemini updates, from AI-powered laptops to vibe-coded widgets and more autonomous Gemini features.

The biggest theme across everything? Google wants Gemini embedded into every layer of the Android experience.

💻 Meet Googlebook

Google officially introduced Googlebook, a new line of laptops built around Gemini Intelligence from the ground up.

The devices, launching this fall with partners like Acer, Asus, Dell, HP, and Lenovo, will include:

- “Magic Pointer,” a Gemini-powered cursor
- Deep Android phone integration
- AI-generated custom widgets
- More proactive Gemini assistance across workflows

It’s basically Google’s clearest attempt yet to create an AI-native computing experience that rivals what OpenAI, Microsoft, and Apple are increasingly moving toward.

🤖 Gemini is becoming more agentic across Android

A lot of the announcements focused less on “chatbot AI” and more on AI that actually does things.

Google showed Gemini taking information from one app and completing tasks in others: building shopping carts from grocery lists, finding events from photos of flyers, and navigating websites and making bookings automatically through Chrome.

Android users are also getting Gemini in Chrome, including experimental auto-browsing features that can complete actions on your behalf.

This puts Google much closer to the broader industry shift toward AI agents becoming the interface itself.

🎨 Android is getting more customizable, too

One of the more interesting launches was Create My Widget, which lets users “vibe-code” custom Android widgets using natural language prompts.

Example: “Suggest three high-protein meal prep recipes every week.”

Gemini then generates a functional widget dashboard for your home screen automatically.

Google also announced:

- Refreshed 3D emojis
- New creator tools for recording screen + face reactions simultaneously
- Better Instagram integrations for Android cameras
- “Rambler,” a smarter Gboard dictation system that cleans up filler words automatically

🚗 Android Auto is becoming a lot smarter

Google is also heavily upgrading Android Auto with personalized widgets, Gemini voice assistance, better media interfaces, and full HD video playback in supported vehicles later this year.

Drivers will even be able to place food orders hands-free through integrations like DoorDash.

The bigger takeaway? Last year’s Android announcements were mostly: “here’s AI inside Android.”

And this year feels more like: “Android itself is becoming an AI operating system.”

Google is clearly trying to make Gemini proactive instead of reactive, embedded instead of separate, and agentic instead of conversational.

And with OpenAI, Anthropic, Microsoft, and Perplexity all racing toward AI-native operating systems and agents, this feels like Google fully signaling that Android is now one of the company’s biggest AI battlegrounds.

💻 Notion is becoming an OS for AI agents.

Source: Notion

Notion Labs just made its biggest AI move yet, and it’s much bigger than note-taking.

At a livestreamed event, the company unveiled a new developer platform designed to turn Notion into a central hub where AI agents, live company data, workflows, and custom automation can all work together inside the same workspace.

The announcement pushes Notion deeper into the rapidly growing “agentic AI” race, where companies are trying to build systems that actually coordinate and execute work.

⚙️ What’s new?

The biggest addition is something called Workers, a cloud-based sandbox where teams can deploy custom code directly inside Notion.

That means companies can now sync external databases into Notion, trigger workflows with webhooks, build custom tools and logic, connect AI agents to internal systems, and automate multistep tasks across apps.

And notably, you don’t even necessarily need to write the code yourself. Notion explicitly says AI coding agents can generate and deploy much of this logic for users.

🤖 Notion now supports external AI agents too

Previously, Notion’s Custom Agents mostly operated inside the Notion ecosystem. Now the company is opening the doors much wider.

Users can:

- chat directly with external AI agents
- assign them work inside Notion
- monitor progress as if they were native teammates

Supported launch partners include Cursor, Anthropic Claude Code, OpenAI Codex, and Decagon AI.

There’s also a new External Agent API for companies that want to connect their own internal agents to Notion workflows.

📊 Live data becomes part of the workspace

One of the more important updates is database syncing. Notion can now continuously pull in live information from external systems like Salesforce, Zendesk, Postgres, and other API-connected databases.

CEO Ivan Zhao described it as turning “your Notion database into a shared canvas powering both workflows and agents.” That’s a meaningful shift.

Instead of static docs and wikis, Notion increasingly wants to become a live operational layer for enterprise AI systems.

Overall, this announcement moves Notion closer to competing with workflow automation platforms, internal tooling systems, orchestration layers, and even lightweight enterprise operating systems.

🛒 Amazon unveils a new AI-powered shopping assistant!

Source: Amazon

Amazon just launched “Alexa for Shopping,” a new AI-powered assistant that turns the search bar itself into a personalized shopping agent.

And unlike Rufus, Amazon’s earlier AI shopping chatbot, this version is designed to do much more than answer product questions.

It wants to learn your habits, automate purchases, track prices, shop across other retailers, and eventually handle parts of shopping for you.

🤖 What Alexa for Shopping can do

The assistant is powered by Alexa+ and is now rolling out to U.S. customers across mobile, desktop, and Echo Show devices. Users can ask conversational questions like: “What’s a good skincare routine for men?” or “When did I last order AA batteries?”

But the bigger shift is how proactive the system is becoming. Alexa for Shopping can:

- compare products
- generate shopping guides
- schedule recurring purchases
- monitor prices
- automatically add items to your cart when conditions are met

Example: “Add this sunscreen to my cart if it drops to $10.”

That’s less “AI search” and more automated purchasing infrastructure.

🌐 Amazon also wants the assistant to shop outside Amazon

One of the more ambitious additions is Amazon’s “Buy for Me” feature. If a product isn’t available on Amazon itself, the assistant can search other online stores, navigate external retailers, and complete purchases on your behalf.

That’s a pretty major escalation in the broader AI-agent race. Increasingly, the battleground isn’t which chatbot gives the best answer, but which AI system actually executes tasks end-to-end.

This launch makes it even clearer that Amazon sees AI as the future operating layer for commerce.

Not just product recommendations or customer support, but purchasing, replenishment, comparison shopping, and decision-making itself.

And unlike many AI companies still experimenting with consumer monetization, Amazon already owns payments, fulfillment, logistics, shopping history, subscriptions, and delivery infrastructure.

This gives it a huge advantage in turning AI agents into actual commercial behavior.

⚡ The Neural Frontier’s weekly spotlight: 3 AI tools making the rounds this week.

1. 🧑‍💻 Open Vibe is a free, open-source toolkit that turns AI coding agents like Claude Code into interactive SaaS tutors, so you actually understand what you're building as you build it.

2. 🎮 ContentPilots is an AI clipping tool that turns full-length Twitch, Kick, and YouTube streams into ready-to-post vertical clips for Shorts, Reels, and TikTok.

3. 💬 Hoogly is an AI-powered employee engagement platform that replaces static surveys with real conversations, surfacing honest team sentiment and turning it into actionable leadership insights.

Wrapping up…

Last week, we had a LOT of drama. And this week? We’ve had a ton of product releases. That’s pretty much how the pendulum swings these days.

Not that we’re complaining 😁, of course.

As always, we’ll be on the frontlines, scouting for the latest and greatest in the AI space, and bringing it straight to your inbox.

We’ll catch you next week on the Neural Frontier 👋!

PS: If you’re the “friend” this email was forwarded to, and you enjoyed it, hit the Subscribe button to see more content like this every week 🙂.